-

Type:

Improvement

-

Resolution: Fixed

-

Priority:

Major - P3

-

Affects Version/s: None

-

Component/s: Connections, Performance

-

None

-

Not Needed

-

-

None

-

None

-

None

-

None

-

None

-

None

Problem Description

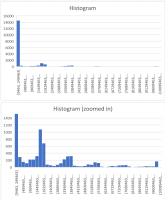

Currently using multi-threading with more threads than Mongo connections in the pool causes a much greater number of Mongo::Error::ConnectionCheckOutTimeout errors than expected. I haven't investigated in detail, but I suspect the connection pool currently doesn't seem to serve queries in a first-in-first-out (FIFO) / round-robin fashion across threads. Some threads execute queries repeatedly, while others don't execute at all, causing Mongo::Error::ConnectionCheckOutTimeout

Why This Matters

This issue makes it nearly impossible to use multi-threaded processing with more threads than the connection pool in a production app. For example, I use a multi-threaded job for mass-sending emails. It will also cause issues with the recent async query mode added to Mongoid.

Example

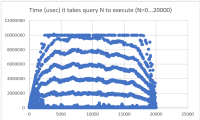

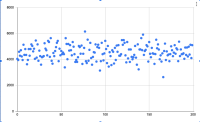

Given the following:

- 10 second wait timeout (default)

- Connection pool with 5 connections

- 200 threads doing queries in a loop

- Average of 10ms per database query

In this case, I expect each thread will wait an average of 200 / 5 * 10ms = 400ms between query executions. I would only expect to 10-sec pool timeouts if queries are taking avg 250ms, which is a very long time for a query.

This however is not what we observe. Instead, it appears some threads are never served by the pool and get timeouts, while other threads "cut" in the queue.

Here's a Mongoid script which reproduces the issue:

# set max_pool_size to 5 in mongoid.yml threads = 200.times.map do |i| Thread.new do j = 0 loop do Mongo::QueryCache.uncached do Person.all.first rescue StandardError => e puts "\nThread #{i} > #{e}" end print '.' if j % 30 == 0 j += 1 end end end threads.each(&:join)

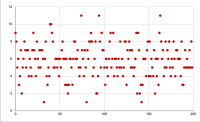

For clarity, here is a timing diagram with 5 connections and 10 threads that illustrates how the queuing should work:

O = actively using connection

| = query finished and connection returned to pool . = waiting in queue |

Thread: Time ->

0: OO|...OO|...OO

1: OO|...OO|...OO

2: OO|...OO|...OO

3: OOO|...OO|...O

4: OOO|....OO|...

5: ...OO|...OO|..

6: ...OO|...OO|..

7: ...OO|...OO|..

8: ....OO|...OO|.

9: ....OOO|...OO|

And here is what I think may be happening:

Thread: Time ->

0: OO|OO|OO|OO|OO

1: OO|.OO|..OO|OO

2: OO|OO|OO|....O

3: OOO|...OO|..OO

4: OOO|OO|OO|OO|.

5: ...OO|...OO|.O

6: ......OO|.....

7: ..........OO|.

8: ..............

9: ..............