Description:

Found out that a special sequence of event may reduce server throughput by 90% for around 10min. So far, the trigger seems drop a collection while there is concurrent oplog tailing going on.

Test setup

- single node replica set with wiredTiger

- use a special written tool to tail oplog with 32 or 64 thread (run from a separate server)

- use bench run run simple insert into multi collection, step of the test

- drop all collection

- use benchRun do simple insert

- check throughput with mongostat

Observation:

The throughput will drop from 70k to 600, and this will last for up to 10min (after stop tailing oplog)

MongoDB shell version: 3.0.0-rc8

connecting to: test

{ "name" : "wiredTiger" }

0: 79966.63852879952 <-- every test last 10 second

1: 73956.35971517103

2: 70263.89507592177

3: 76272.46177347556

4: 76179.7331941972

5: 76071.29258252526

6: 76504.322290524

7: 77133.12436735495

8: 76096.40869079733

9: 600.3870288152949 <--- the drop

10: 585.1938886069264 <-- stop tailing oplog

11: 587.1715686099094

.....

67: 569.518186223205

68: 550.9203010903097

69: 401.6865724762767

70: 74848.28307466969 <--- recover, drop last for 600sec

71: 76152.44327463405

- this also happen with smaller thread number, much easier to repro with higher thread count (tailing oplog)

- could not reproduce this with mmapv1

- tested with RC8

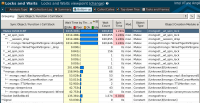

perf topN

- 12.37% mongod mongod [.] __rec_row_leaf_insert

- __rec_row_leaf_insert

- __rec_row_leaf

- __wt_reconcile

- 99.30% __wt_cache_op

- 98.54% __wt_checkpoint

- 98.58% __wt_txn_checkpoint

__session_checkpoint

__ckpt_server

start_thread

+ 1.42% __checkpoint_apply

+ 1.46% __wt_txn_checkpoint

+ 0.70% __evict_review

- 10.84% mongod mongod [.] __config_getraw.isra.

- __config_getraw.isra.0

- 54.82% __wt_config_getones

- 63.83% __wt_schema_open_table

__wt_schema_get_table

__wt_schema_drop

__session_drop

mongo::WiredTigerKVEngine::dropAllQueued()

mongo::WiredTigerSessionCache::releaseSession(mongo::WiredTigerSession*)

mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

+ mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

- 27.61% __wt_schema_open_colgroups

__wt_schema_open_table

__wt_schema_get_table

__wt_schema_drop

__session_drop

mongo::WiredTigerKVEngine::dropAllQueued()

mongo::WiredTigerSessionCache::releaseSession(mongo::WiredTigerSession*)

mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

+ mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

+ 8.47% __wt_config_gets_def

- 44.87% __wt_config_get

- 98.94% __wt_config_gets

- 71.56% __wt_cursor_init

__wt_curfile_create

__wt_metadata_cursor

__wt_metadata_update

__wt_meta_track_off

__wt_schema_drop

__session_drop

mongo::WiredTigerKVEngine::dropAllQueued()

mongo::WiredTigerSessionCache::releaseSession(mongo::WiredTigerSession*)

mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

+ mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

- 15.69% __wt_curfile_create

__wt_metadata_cursor

__wt_metadata_update

__wt_meta_track_off

__wt_schema_drop

__session_drop

mongo::WiredTigerKVEngine::dropAllQueued()

mongo::WiredTigerSessionCache::releaseSession(mongo::WiredTigerSession*)

mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

+ mongo::WiredTigerRecoveryUnit::~WiredTigerRecoveryUnit()

+ 12.62% __wt_schema_drop

+ 1.06% __wt_config_collapse

Setup

- rc8

"version" : "3.0.0-rc8", "gitVersion" : "9d0714cdcffadb203ff68730acedbc40875403da", - parameters

"--dbpath=/data/ssd-sdc/dbs-wt", "--logpath=/data/ssd-sdc/logs/mongod.log", "--fork", "--replSet", "r", "--storageEngine=wiredTiger"