- Type: Bug
- Resolution: Duplicate
- Priority: Critical - P2
- Affects Version/s: 3.4.2
- Component/s: None

Every night, we run an aggregation, followed by a bulk insert, followed by a rename with the "dropTarget" option.
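For readers who want to reproduce the sequence, here is a minimal sketch of such a nightly job. It is an illustration under assumptions, not our actual job: the function names, the collection names passed in, and the pipeline are hypothetical, and `client` is assumed to be a PyMongo `MongoClient` (or any object with the same interface). The `renameCollection` admin command and its `dropTarget` field are the server's own.

```python
def build_rename_command(db_name, staging, target):
    """Build the renameCollection admin command with dropTarget enabled."""
    return {
        "renameCollection": f"{db_name}.{staging}",  # full source namespace
        "to": f"{db_name}.{target}",                 # full target namespace
        "dropTarget": True,                          # drop the existing target first
    }


def nightly_refresh(client, db_name, source, staging, target, pipeline):
    """Aggregate, bulk insert into a staging collection, then rename over the target.

    All collection names here are hypothetical placeholders.
    """
    db = client[db_name]
    docs = list(db[source].aggregate(pipeline))
    if not docs:
        return None  # nothing to publish; leave the current target untouched
    db[staging].insert_many(docs)
    # renameCollection has to be issued against the admin database.
    return client.admin.command(build_rename_command(db_name, staging, target))
```

The rename is the last step and is the only one that touches the live collection, which is why the trouble on the secondary lines up with it rather than with the aggregation or the insert.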

Without fail, roughly one second after the rename completes (the time it takes for the rename to propagate to the secondary), performance on our secondary degrades severely. It does not recover unless we restart the secondary.

This behavior did not occur until we upgraded from 3.2.10 to 3.4.2.

Once the performance drops, every couple of seconds we see hundreds of very simple queries pile up. They all block and then complete simultaneously. It looks like some sort of concurrency issue.

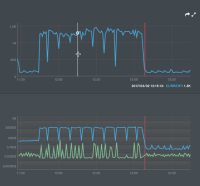

Included are some graphs and logs that illustrate the problem.

Server restarted at 22:00 and aggregation happened at 00:00:

Here are some very simple queries taking over 6 seconds to complete:

2017-03-02T16:02:32.034+0000 I COMMAND [conn1328] command thinkmap.gamequestion command: find { find: "gamequestion", filter: { _id: 163258 }, projection: { workflow-writing: 0, votes: 0, ag: 0 }, limit: 1, singleBatch: true } planSummary: IDHACK keysExamined:1 docsExamined:1 cursorExhausted:1 numYields:1 nreturned:1 reslen:740 locks:{ Global: { acquireCount: { r: 4 }, acquireWaitCount: { r: 2 }, timeAcquiringMicros: { r: 6288267 } }, Database: { acquireCount: { r: 2 } }, Collection: { acquireCount: { r: 2 } } } protocol:op_query 6356ms

2017-03-02T16:02:32.034+0000 I COMMAND [conn1289] command thinkmap.gamequestion command: find { find: "gamequestion", filter: { _id: 300683 }, projection: { workflow-writing: 0, votes: 0, ag: 0 }, limit: 1, singleBatch: true } planSummary: IDHACK keysExamined:1 docsExamined:1 cursorExhausted:1 numYields:1 nreturned:1 reslen:642 locks:{ Global: { acquireCount: { r: 4 }, acquireWaitCount: { r: 2 }, timeAcquiringMicros: { r: 6378585 } }, Database: { acquireCount: { r: 2 } }, Collection: { acquireCount: { r: 2 } } } protocol:op_query 6443ms

2017-03-02T16:02:32.034+0000 I COMMAND [conn1166] command thinkmap.gamequestion command: find { find: "gamequestion", filter: { _id: 131553 }, projection: { workflow-writing: 0, votes: 0, ag: 0 }, limit: 1, singleBatch: true } planSummary: IDHACK keysExamined:1 docsExamined:1 cursorExhausted:1 numYields:1 nreturned:1 reslen:526 locks:{ Global: { acquireCount: { r: 4 }, acquireWaitCount: { r: 2 }, timeAcquiringMicros: { r: 6555928 } }, Database: { acquireCount: { r: 2 } }, Collection: { acquireCount: { r: 2 } } } protocol:op_query 6623ms

2017-03-02T16:02:32.034+0000 I COMMAND [conn1369] command thinkmap.gamequestion command: find { find: "gamequestion", filter: { _id: 212580 }, projection: { workflow-writing: 0, votes: 0, ag: 0 }, limit: 1, singleBatch: true } planSummary: IDHACK keysExamined:1 docsExamined:1 cursorExhausted:1 numYields:1 nreturned:1 reslen:683 locks:{ Global: { acquireCount: { r: 4 }, acquireWaitCount: { r: 2 }, timeAcquiringMicros: { r: 6193103 } }, Database: { acquireCount: { r: 2 } }, Collection: { acquireCount: { r: 2 } } } protocol:op_query 6257ms
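One way to check the concurrency theory against log lines like these is to compare the time spent waiting for the Global lock with the total duration of each operation. The following is a minimal sketch, assuming the 3.4-style log format shown above; `lock_wait_share` is a hypothetical helper name, and the sample string is the tail of the first log entry.

```python
import re

# Global read-lock wait time, in microseconds, inside the locks:{ ... } section.
LOCK_WAIT = re.compile(r"Global: \{.*?timeAcquiringMicros: \{ r: (\d+) \}")
# Total operation duration at the end of the line, e.g. "6356ms".
DURATION = re.compile(r" (\d+)ms\s*$")


def lock_wait_share(line):
    """Return (lock wait in ms, total ms, wait / total), or None if a field is missing."""
    wait = LOCK_WAIT.search(line)
    total = DURATION.search(line)
    if not wait or not total:
        return None
    wait_ms = int(wait.group(1)) / 1000.0
    total_ms = int(total.group(1))
    share = wait_ms / total_ms if total_ms else 0.0
    return wait_ms, total_ms, share


sample = (
    "locks:{ Global: { acquireCount: { r: 4 }, acquireWaitCount: { r: 2 }, "
    "timeAcquiringMicros: { r: 6288267 } }, Database: { acquireCount: { r: 2 } }, "
    "Collection: { acquireCount: { r: 2 } } } protocol:op_query 6356ms"
)
wait_ms, total_ms, share = lock_wait_share(sample)
print(f"waited {wait_ms:.0f} ms of {total_ms} ms for the Global lock ({share:.1%})")
```

For the entries above, nearly all of the elapsed time is spent acquiring the Global read lock rather than executing the IDHACK lookup itself, which is consistent with the queries blocking together and then completing at the same moment.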