- Type: Bug
- Resolution: Fixed
- Priority: Major - P3
- Affects Version/s: None
- Component/s: None

- Fully Compatible
- ALL
- Platforms 2017-09-11, Platforms 2017-10-02


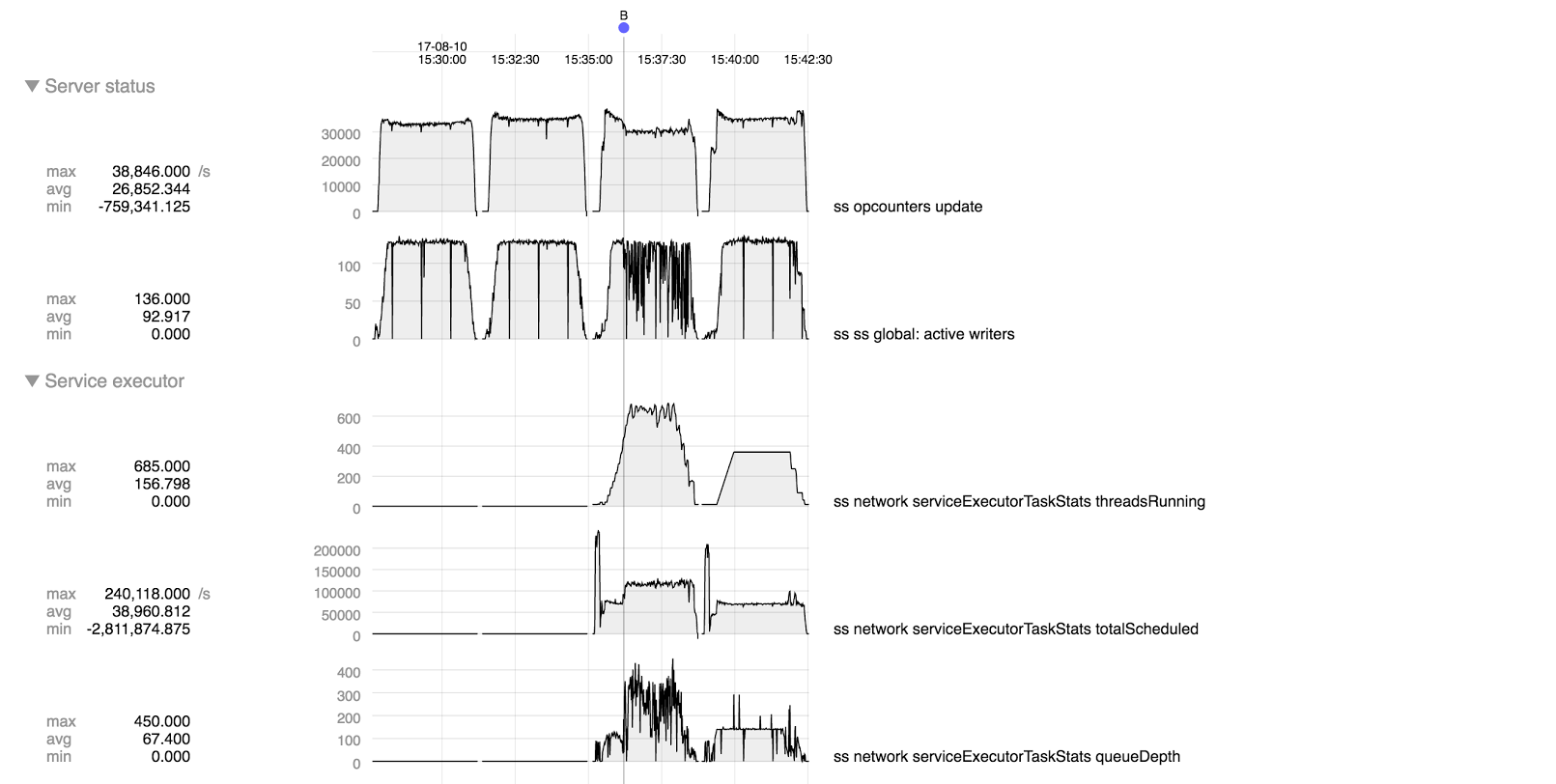

After eliminating the impact of markThreadIdle (see SERVER-30609), we see some additional issues with the adaptive service executor. These appear to be related, so I am covering both in one ticket for now.

The four runs are:

- *synchronous, 3.5.11*

- *synchronous, 3.5.11 with markThreadIdle eliminated.* This shows that eliminating markThreadIdle gives a small performance boost even for the synchronous case in this test, so I am using this run as the basis for comparison for the adaptive tests.

- *adaptive, 3.5.11 with markThreadIdle eliminated.* After eliminating markThreadIdle we still see a performance deficit relative to synchronous, excessive threads (600-700 threads for only 500 connections), and a performance drop associated with increased calls to schedule at B. (Note: ignore the burst of calls to schedule at the beginning of each run; these were associated with the inserts that set up the data at the beginning of the run.)

- *adaptive, 3.5.11 with markThreadIdle eliminated, plus exp.diff.* An experimental patch (attached) that eliminates apparently unnecessary calls to schedule fixes those problems: performance is comparable to the synchronous case (exceeding it slightly at low thread count), the number of threads is well-behaved and never exceeds 350, and the rate of calls to schedule stays steady at about 2x the rate of operations.

My suspicion is that the unnecessary calls to schedule were fooling the adaptive executor into thinking it needed more threads than it actually did.
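To illustrate the suspicion, here is a toy model only; it is not the adaptive executor's actual heuristic. It assumes a hypothetical sizing rule based on Little's law (tasks in flight = task arrival rate x time per task), in which every call to schedule counts as a task. The class name, method name, and all numbers are made up for illustration and are not measurements from these runs.

```java
final class ThreadEstimate {
    // Little's law: average tasks in flight = arrival rate * time per task.
    // Integer arithmetic (microseconds) avoids floating-point rounding; result is rounded up.
    static long threadsNeeded(long tasksPerSecond, long microsPerTask) {
        return (tasksPerSecond * microsPerTask + 999_999) / 1_000_000;
    }

    public static void main(String[] args) {
        long opsPerSecond = 100_000;   // hypothetical operation rate
        long microsPerTask = 1_000;    // hypothetical time a thread spends per scheduled task

        // About 2 calls to schedule per operation, as in the patched run.
        long steady = threadsNeeded(opsPerSecond * 2, microsPerTask);

        // Hypothetical: extra, unnecessary calls double the apparent task rate.
        long inflated = threadsNeeded(opsPerSecond * 4, microsPerTask);

        System.out.println("estimate with 2 schedule calls/op: " + steady);
        System.out.println("estimate with 4 schedule calls/op: " + inflated);
    }
}
```

Under this assumed rule the thread estimate scales linearly with the schedule rate, so calls that do no real work would still push the estimate up.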

Test program attached. Run as follows:

    # compile
    mjar=$(ls mongo-java-driver-*.jar)
    mkdir -p load
    javac -cp $mjar -Xlint:deprecation load.java -d load

    # run
    java -cp load:$mjar load host=... op=ramp_update docs=1000000 threads=500 tps=10 qps=1000 dur=150 size=100 updates=10

This:

- initializes with 1000000 docs

- ramps up the load to 500 threads at 10 new threads per second

- attempts up to 1000 queries per second on each thread

- runs each thread for 150 seconds

- uses a document size of 100

- performs 10 updates per query
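For reference, a rough sketch of what the parameters above imply as upper bounds. It assumes that qps is a per-thread cap, that each query performs the given number of updates, and that the last thread starts when the ramp ends; the `LoadPlan` class and its method names are hypothetical and are not part of the attached load.java.

```java
final class LoadPlan {
    // Seconds needed to start all threads at the given ramp rate (rounded up).
    static long rampSeconds(long threads, long threadsPerSecond) {
        return (threads + threadsPerSecond - 1) / threadsPerSecond;
    }

    // Upper bound on attempted queries per second once all threads are running.
    static long peakQueriesPerSecond(long threads, long qpsPerThread) {
        return threads * qpsPerThread;
    }

    // Upper bound on attempted updates per second, assuming each query does `updates` updates.
    static long peakUpdatesPerSecond(long threads, long qpsPerThread, long updates) {
        return peakQueriesPerSecond(threads, qpsPerThread) * updates;
    }

    // Approximate wall-clock length of a run: ramp time plus the lifetime of the last thread.
    static long totalSeconds(long threads, long threadsPerSecond, long durSeconds) {
        return rampSeconds(threads, threadsPerSecond) + durSeconds;
    }

    public static void main(String[] args) {
        long threads = 500, tps = 10, qps = 1000, dur = 150, updates = 10;
        System.out.println("ramp seconds:         " + rampSeconds(threads, tps));
        System.out.println("peak attempted qps:   " + peakQueriesPerSecond(threads, qps));
        System.out.println("peak attempted upd/s: " + peakUpdatesPerSecond(threads, qps, updates));
        System.out.println("approx. run seconds:  " + totalSeconds(threads, tps, dur));
    }
}
```

These are ceilings on what the client attempts; the rate actually achieved depends on how quickly the server responds.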

The test runs above used one 24-CPU machine for mongod and another for the Java client.