Type: Bug

Resolution: Done

Priority: Major - P3

Affects Version/s: 3.6.3, 3.6.4

Component/s: Storage

ALL

Storage Non-NYC 2018-06-04

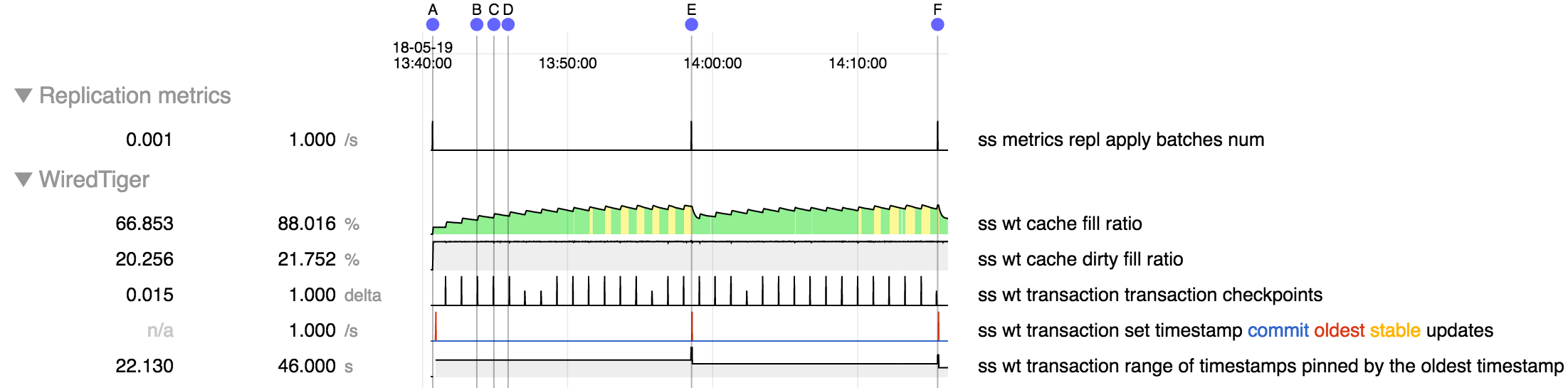

The attached repro script does the following:

- Inserts 1M documents with 10 indexes over fields that are random strings

- Stops one secondary, then removes all documents via one of the indexes, and therefore in random order.

- Restarts that secondary. While it is catching up (after the script completes), batches will be large and take several minutes to complete due to SERVER-34938, so multiple checkpoints will run while timestamps are pinning content in cache. It appears that additional unevictable clean content is generated at each checkpoint, exacerbating the effects of SERVER-34938.

- Batch boundaries are at A, E, and F.

- At each checkpoint (e.g. B, C, D) the amount of clean content increases; this content is presumably unevictable because the oldest timestamp is only updated between batches.

- At batch boundaries, when the oldest timestamp is advanced, the cache content is reduced, presumably because it becomes evictable.

The above was run on a machine with 24 CPUs and a lot of memory, but the script uses numactl to limit each instance to two CPUs and the cache to 4 GB (during setup) and 1 GB (during recovery), so it should be runnable on a more modest machine.
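For readers without the attachment, the data-loading and removal steps might look roughly like the sketch below. This is not the attached script: the field names (`f0` to `f9`), string length, batch size, and helper names are all assumptions made for illustration, and `collection` is assumed to be a pymongo-style collection object connected to the replica set primary.

```python
import itertools
import random
import string

NUM_FIELDS = 10  # one index per random-string field (field names are hypothetical)


def random_string(rng, length=16):
    """Return a random lowercase string; the length is an arbitrary choice."""
    return "".join(rng.choices(string.ascii_lowercase, k=length))


def make_docs(count, rng):
    """Yield `count` documents, each with NUM_FIELDS random-string fields."""
    for i in range(count):
        doc = {"_id": i}
        for f in range(NUM_FIELDS):
            doc[f"f{f}"] = random_string(rng)
        yield doc


def load(collection, total=1_000_000, batch=10_000, seed=0):
    """Create one index per field, then insert `total` documents in batches."""
    rng = random.Random(seed)
    for f in range(NUM_FIELDS):
        collection.create_index(f"f{f}")
    docs = make_docs(total, rng)
    while True:
        chunk = list(itertools.islice(docs, batch))
        if not chunk:
            break
        collection.insert_many(chunk)


def remove_via_index(collection, field="f0"):
    """Delete every document using a range predicate on an indexed field.

    Assumption: the query planner picks the index on `field`, so documents
    are visited in index order, which is random relative to insertion order.
    """
    return collection.delete_many({field: {"$gte": ""}})
```

In this sketch, `load` would run while all members are up, and `remove_via_index` would run after one secondary has been stopped, so that the restarted secondary has to replay the deletes from the oplog.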

Is related to:

- SERVER-33191 Cache-full hangs on 3.6 (Closed)

- SERVER-34938 Secondary slowdown or hang due to content pinned in cache by single oplog batch (Closed)