- Type: Bug
- Resolution: Fixed
- Priority: Major - P3
- Affects Version/s: 4.2.1
- Component/s: WiredTiger
- Fully Compatible
- ALL
- Storage Engines 2019-12-16, Storage Engines 2019-12-30, Storage Engines 2020-01-13

The test creates 5 collections with 10 indexes each. The indexes are designed to have keys with substantial common prefixes, which appeared to be important for reproducing the issue. The collections are populated, then sparsely updated in parallel, aiming for roughly 1 update per page, so that dirty pages are generated at a high rate with little application work.
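The workload shape described above can be sketched roughly as follows. This is not the original test script, which is not attached here; the field names, the prefix length, and the `docs_per_page` estimate are all hypothetical, and picking one `_id` per fixed-size run of ids only approximates "1 update per page".

```python
import random

# Hypothetical shared prefix; the ticket only says prefixes are "substantial".
PREFIX = "x" * 40


def make_doc(i, n_fields=10):
    """Build one document whose n_fields indexed values share a long prefix."""
    doc = {"_id": i}
    for f in range(n_fields):
        # Only the zero-padded suffix differs between documents.
        doc[f"f{f}"] = f"{PREFIX}{i:010d}"
    return doc


def sparse_update_ids(n_docs, docs_per_page, seed=0):
    """Pick one random _id from each run of docs_per_page consecutive ids."""
    rng = random.Random(seed)
    return [
        rng.randrange(start, min(start + docs_per_page, n_docs))
        for start in range(0, n_docs, docs_per_page)
    ]
```

Each of the parallel updaters would then touch only the ids returned by `sparse_update_ids` for its collection, so nearly every page is dirtied by a single small write.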

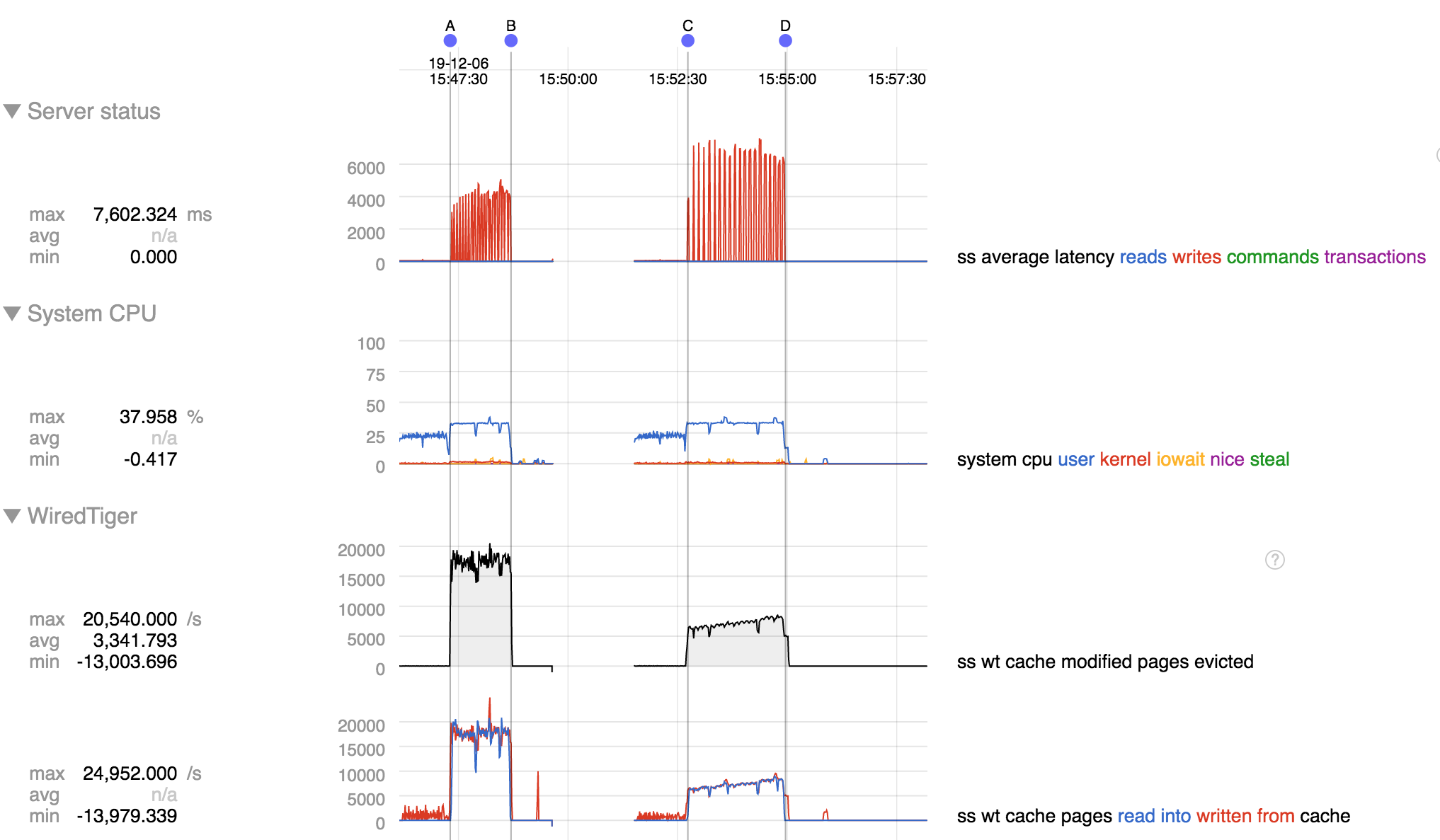

Left is 4.0.13, right is 4.2.1. A-B and C-D are the update portions of the test. The performance regression shows up as a longer update portion and a higher average latency.

The rates of pages written to disk and pages evicted are substantially lower in 4.2.1. In this test the dirty fill ratio is pegged at 20%, so the rate at which pages can be written to disk is the bottleneck.

The test is run on a 24-CPU machine, so the CPU utilization during both tests is roughly what would be expected with ~5 constantly active application threads plus 3-4 constantly active eviction threads. But despite the same CPU activity for eviction, we are evicting pages at a much lower rate in 4.2.1, so we must be using more CPU per page evicted. Perf shows that this additional CPU activity is accounted for by __wt_row_leaf_key_work.
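One way to see why keys with long common prefixes could make key construction expensive is a toy model of prefix compression. This is an illustrative assumption, not WiredTiger's actual code or on-disk format: each key is stored as the number of bytes shared with its predecessor plus a suffix, so rebuilding a key means walking back to a key stored in full and rolling forward. The function names are hypothetical.

```python
def compress(keys):
    """Prefix-compress sorted keys into (shared_prefix_len, suffix) cells."""
    cells, prev = [], ""
    for k in keys:
        n = 0
        while n < min(len(k), len(prev)) and k[n] == prev[n]:
            n += 1
        cells.append((n, k[n:]))
        prev = k
    return cells


def build_key(cells, slot):
    """Rebuild the full key at slot; return (key, number_of_cells_visited)."""
    # Walk backward to the nearest cell stored without a shared prefix.
    start = slot
    while cells[start][0] != 0:
        start -= 1
    # Roll forward, rebuilding each key from its predecessor.
    key = ""
    for n, suffix in cells[start:slot + 1]:
        key = key[:n] + suffix
    return key, slot - start + 1


keys = ["user:%04d" % i for i in range(100)]
cells = compress(keys)
key, visited = build_key(cells, 99)
```

In this model every key shares at least the `user:` prefix with its predecessor, so rebuilding the last key visits all 100 cells. Without a cached starting point, that cost is paid again for each key built, which is consistent with more CPU per page evicted.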

- depends on: WT-5319 Avoid clearing the saved last-key when no instantiated key (Closed)
- is duplicated by: SERVER-44752 Big CPU performance regression between 4.0.13 and 4.2.1 (on deletion it seems), about 16 times (Closed)
- is related to: SERVER-44948 High cache pressure in 4.2 replica set vs 4.0 (Closed)