- Type: Question
- Resolution: Community Answered
- Priority: Major - P3

- Affects Version/s: None
- Component/s: Sharding

- Fully Compatible

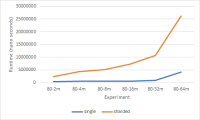

I ran a few experiments to compare sharded and non-sharded MongoDB deployments. In both scenarios I limited the mongod memory to 256MB and disabled compression. I use a synthetic dataset of 80-byte documents, vary the number of documents in the collection, and measure the average time to retrieve a random document by id. In the sharded environment I use ranged sharding on the id, and the data is evenly distributed among the 3 servers (no replication). Here are the results I got (80-2m means 2,000,000 documents of 80 bytes).
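For reference, the measurement described above can be sketched roughly as follows. This is an illustrative sketch, not the script behind the attached results: the names `average_lookup_seconds` and `mongo_fetch` are hypothetical, and it assumes integer ids in the range 0 to N-1 stored in `_id`.

```python
import random
import time
from typing import Callable, Optional


def average_lookup_seconds(fetch: Callable[[int], Optional[dict]],
                           num_docs: int,
                           samples: int = 1000,
                           seed: int = 0) -> float:
    """Return the mean wall-clock time of `fetch` over random ids in [0, num_docs)."""
    rng = random.Random(seed)
    # Pick the ids up front so random number generation is not part of the timing.
    ids = [rng.randrange(num_docs) for _ in range(samples)]
    start = time.perf_counter()
    for doc_id in ids:
        if fetch(doc_id) is None:
            raise LookupError(f"document {doc_id} not found")
    return (time.perf_counter() - start) / samples


def mongo_fetch(uri: str, db_name: str, coll_name: str) -> Callable[[int], Optional[dict]]:
    """Build a fetch function backed by pymongo (needs a reachable mongod or mongos)."""
    # Imported lazily so the timing harness itself runs without pymongo installed.
    from pymongo import MongoClient
    coll = MongoClient(uri)[db_name][coll_name]
    # Assumption: documents are keyed by an integer _id.
    return lambda doc_id: coll.find_one({"_id": doc_id})
```

The same harness can be pointed at the standalone mongod and at the mongos, for example `average_lookup_seconds(mongo_fetch(uri, "test", "docs"), num_docs=2_000_000)`, where the database and collection names are placeholders.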

As expected, there is an overhead associated with sharding. However, my question is: shouldn't this overhead be constant? Why does the gap between the runtime of the sharded and the non-sharded instance grow as the number of documents increases? As far as I can see, this overhead should be independent of the document count.

I have attached all the logs from each experiment (each collection used a different db location) for the config server, the mongods, and the mongos. The configurations I used are also attached.