-

Type:

Bug

-

Resolution: Done

-

Priority:

Major - P3

-

None

-

Affects Version/s: 4.4.0-rc7

-

Component/s: None

-

ALL

-

Storage - Ra 2022-01-24, Storage - Ra 2022-02-07, Storage - Ra 2022-02-21, Storage - Ra 2022-03-07, Storage - Ra 2022-03-21, Storage - Ra 2022-06-13

-

(copied to CRM)

-

0

-

None

-

None

-

None

-

None

-

None

-

None

-

None

Test inserts 100 k documents, removes them all, then looks for (the now non-existent) documents via the _id index.

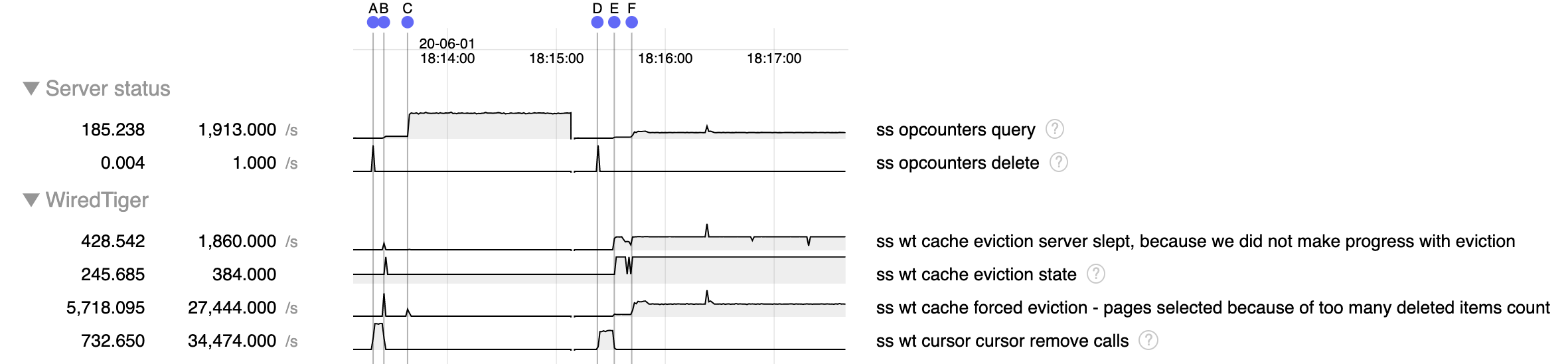

Left is 4.2.6, right is 4.4.0-rc7

- A-B, D-E: removing 100 k documents. There is a regression of about 30% in rate of deletion ("cursor remove calls")

- starting B, E: doing a find via the _id index for a non-existent document

- B-C: in 4.2.6 for a period of time the queries are slow, then at C the rate picks up, apparently having something to do with pages evicted due to too many deleted items

- E-F: in 4.4.0-rc7 the rate is also intially slow (albeit slower than in 4.2.6 at the same point), then picks up some at F, but remains much slower than in 4.2.6. Behavior of pages evicted due to too many deleted items is different - looks like we're trying but not able to evict them?

PMP shows most of our time here

#0 0x00005652ac555e4c in __wt_value_return_buf () #1 0x00005652ac5248b7 in __wt_txn_read () #2 0x00005652ac525bdf in __wt_btcur_next () #3 0x00005652ac53294a in __wt_btcur_search_near () #4 0x00005652ac484528 in __curfile_search_near () #5 0x00005652ac41695f in int mongo::wiredTigerPrepareConflictRetry<mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seekWTCursor(mongo::KeyString::Value const&)::{lambda()#1}>(mongo::OperationContext*, mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seekWTCursor(mongo::KeyString::Value const&)::{lambda()#1}&&) () #6 0x00005652ac417070 in mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seekWTCursor(mongo::KeyString::Value const&) () #7 0x00005652ac41d212 in mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seek(mongo::KeyString::Value const&, mongo::SortedDataInterface::Cursor::RequestedInfo) () #8 0x00005652ace06b22 in mongo::IndexScan::initIndexScan() () #9 0x00005652ace07817 in mongo::IndexScan::doWork(unsigned long*) ()

This one also showed up once

#0 0x00005652ac52331e in __wt_txn_upd_value_visible_all () #1 0x00005652ac526917 in __wt_btcur_next () #2 0x00005652ac53294a in __wt_btcur_search_near () #3 0x00005652ac484528 in __curfile_search_near () #4 0x00005652ac41695f in int mongo::wiredTigerPrepareConflictRetry<mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seekWTCursor(mongo::KeyString::Value const&)::{lambda()#1}>(mongo::OperationContext*, mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seekWTCursor(mongo::KeyString::Value const&)::{lambda()#1}&&) () #5 0x00005652ac417070 in mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seekWTCursor(mongo::KeyString::Value const&) () #6 0x00005652ac41d212 in mongo::(anonymous namespace)::WiredTigerIndexCursorBase::seek(mongo::KeyString::Value const&, mongo::SortedDataInterface::Cursor::RequestedInfo) () #7 0x00005652ace06b22 in mongo::IndexScan::initIndexScan() () #8 0x00005652ace07817 in mongo::IndexScan::doWork(unsigned long*) ()

Repro: 3-node replica set

function repro() {

// insert 100 k documents

nthreads = 10

threads = []

for (var t=0; t<nthreads; t++) {

thread = new ScopedThread(function(t) {

size = 10

count = 10*1000;

every = 1000

const x = 'x'.repeat(size)

for (var i=0; i<count; i+=every) {

many = []

for (var j=0; j<every; j++)

many.push({_id: t*count+i+j, x})

db.c.insertMany(many)

if (i%10000==0) print(t, i)

}

}, t)

threads.push(thread)

thread.start()

}

for (var t = 0; t < nthreads; t++)

threads[t].join()

// remove them

print("removing")

db.c.remove({_id: {$lt: 100000}})

// loop trying to find non-existent document via _id index

print("finding")

while (true)

db.c.find({_id: {$lt: 100000}}).itcount()

}

- related to

-

SERVER-59776 50% regression in single multi-update

-

- Closed

-

-

WT-10424 cursor::search_near slow performance if many deleted items are present

-

- Closed

-

-

WT-5769 Search history store can potentially walk the whole history store tree

-

- Closed

-

-

WT-6349 Don't truncate history store updates for globally visible timestamped deletes

-

- Closed

-

-

SERVER-54939 Investigate secondary batching behavior in v4.4

-

- Closed

-