-

Type:

Bug

-

Resolution: Done

-

Priority:

Major - P3

-

Affects Version/s: 2.8.0-rc5

-

Component/s: Replication, WiredTiger

-

None

-

Fully Compatible

-

ALL

-

None

-

None

-

None

-

None

-

None

-

None

-

None

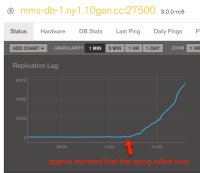

We converted one secondary to 2.8.0-rc5 at approximately 16:00 UTC on 2015-01-016. The throughput observed during the "oplog catch-up" phases of the initial sync was truly impressive!

The secondary continued to perform as expected until 2015-01-17 at approximately 11:00 UTC. At this point it seems that the throughput of the secondary dropped enough that it can no longer keep up with the 2.6.5 primary. The replication lag is increasing steadily, but in a linear fashion. Thus, the throughput dropped to X% of the primary load, but that X% has been holding steady since 11:00 - X does not seem to be decreasing.

There is nothing obvious in the logs (will attach anyway) other than a gradual increase in the frequency of "serverStatus was very slow" messages. serverStatus appears to be slow in the oplog phase. (Presumably, this is symptom, not cause, but mentioning anyway).

0 0 0

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T04" | wc

0 0 0

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T05" | wc

0 0 0

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T06" | wc

0 0 0

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T07" | wc

1 61 421

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T08" | wc

3 183 1266

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T09" | wc

12 732 5076

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T10" | wc

53 3233 22402

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T11" | wc

147 8967 62167

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T12" | wc

154 9394 65086

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T13" | wc

151 9211 63832

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T14" | wc

142 8662 60027

[cnelson@mms-db-1.ny1 /var/log/mongod-mmsconfig1-prod]$ grep "was very slow" mongod.log | grep "2015-01-17T15" | wc

- is duplicated by

-

SERVER-17046 FASSERT 18750 in rsBackgroundSync

-

- Closed

-

-

SERVER-17055 secondary cannot keep up once oplog hit cap with wiredTiger

-

- Closed

-

-

SERVER-17074 Sharded Replicaset - replicas fall behind (3.0.0-rc6)

-

- Closed

-

- related to

-

SERVER-16868 Significant slowdown in capped collection performance when cap reached

-

- Closed

-

-

SERVER-17083 Background thread for oplog clean-up can timeout during repair (invariant failure)

-

- Closed

-

-

SERVER-17094 repairing local db with WiredTiger will deadlock with the oplog cleaning thread and crash

-

- Closed

-