- Type: Bug
- Resolution: Duplicate
- Priority: Major - P3
- Affects Version/s: 3.4.1
- Component/s: Replication

We have a small three-member replica set running MongoDB 3.4.1:

- Primary. Physical local server, Windows Server 2012, 64 GB RAM, 6 cores. Hosted in Scandinavia.

- Secondary. Amazon EC2, Windows Server 2016, r4.2xlarge, 61 GB RAM, 8 vCPUs. Hosted in Germany.

- Arbiter. Tiny cloud-based Linux instance.

The WiredTiger cache has its default size of around 50% of RAM, so the mongod process consumes around 32 GB in our case. In addition, MongoDB gradually uses up all remaining free memory through the filesystem cache (memory mapping). As far as we know, this is expected behavior.
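
As a rough check of the figure above, here is a minimal sketch of the default cache size, assuming the rule documented for MongoDB 3.4 (the larger of 50% of (RAM - 1 GB) and 256 MB). The function name is hypothetical and the RAM sizes are the ones listed for the two data-bearing members.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def default_wiredtiger_cache_bytes(total_ram_bytes: int) -> int:
    """Assumed 3.4 default: the larger of 50% of (RAM - 1 GB) and 256 MB."""
    return max((total_ram_bytes - GIB) // 2, 256 * MIB)


# RAM sizes taken from the member list in this report.
for name, ram_gb in (("primary", 64), ("secondary", 61)):
    cache = default_wiredtiger_cache_bytes(ram_gb * GIB)
    print(f"{name}: about {cache / GIB:.1f} GiB of WiredTiger cache")
```

Under that assumption the primary ends up slightly below half of its 64 GB, which is consistent with the roughly 32 GB we observe for the mongod process.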

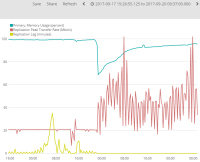

However, once the amount of available memory on the server drops below 1-4% (by Windows' definition of "Available memory"), the replication speed from the primary to the secondary is capped at just over 20 Mbit/s. Replication never exceeds that speed, and any additional data to replicate queues up and results in replication lag.
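
To illustrate how quickly a backlog builds under such a cap, the following sketch does the arithmetic. The 20 Mbit/s default is the cap reported here; the 30 Mbit/s write rate in the usage line is a made-up figure for illustration only, and the function name is hypothetical.

```python
def backlog_gb(hours: float, oplog_rate_mbit_s: float, cap_mbit_s: float = 20.0) -> float:
    """Unreplicated data (decimal GB) after `hours` of sustained writes.

    Anything produced above the cap accumulates; below the cap nothing queues.
    """
    excess_mbit_s = max(oplog_rate_mbit_s - cap_mbit_s, 0.0)
    # Mbit/s * seconds -> Mbit, / 8 -> MB, / 1000 -> GB
    return excess_mbit_s * hours * 3600 / 8 / 1000


# Hypothetical example: one hour of writes generating 30 Mbit/s of oplog traffic.
print(f"{backlog_gb(1.0, 30.0):.1f} GB queued after one hour")
```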

This is not a pure bandwidth issue: while throttling is taking place, we can transfer data between the two servers over FTP at far more than 20 Mbit/s.

To prove that low memory is causing this throttling, we ran a small script that allocated and then freed around 10 GB of memory on the server. Since there was almost no available memory, this memory was reclaimed mainly from the filesystem cache, and in part from the mongod process. The throttling stopped immediately, as shown in the attached screenshot, and replication ran at full speed. This lasted for around 3 days, until MongoDB had again consumed all free memory through the filesystem cache, at which point replication was throttled once more.
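
The script itself is not attached. A minimal sketch of the same idea, assuming plain Python and hypothetical names, allocates memory in chunks, touches every page so the operating system actually has to back it, and then releases everything:

```python
def allocate_and_release(total_bytes: int,
                         chunk_bytes: int = 256 * 1024 * 1024,
                         page_bytes: int = 4096) -> int:
    """Allocate `total_bytes` in chunks, touch each page, then free it all.

    Returns the number of bytes that were allocated before release.
    """
    chunks = []
    allocated = 0
    while allocated < total_bytes:
        size = min(chunk_bytes, total_bytes - allocated)
        buf = bytearray(size)
        # Write one byte per page so the memory is really committed,
        # which forces the OS to reclaim pages from elsewhere.
        for offset in range(0, size, page_bytes):
            buf[offset] = 1
        chunks.append(buf)
        allocated += size
    # Dropping the references lets the interpreter free the buffers.
    chunks.clear()
    return allocated
```

Calling `allocate_and_release(10 * 1024 ** 3)` would correspond to the roughly 10 GB used in our test. The chunk size and page size are assumptions, not tuned values.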

This is 100% reproducible, and in fact it is the workaround we currently rely on to avoid replication lag.

We haven't found a way to configure the amount of memory used for memory mapping, and we currently suspect that this is a bug in MongoDB. We haven't found anything in the logs that explains why the throttling takes place, and our search for duplicates of this bug was unsuccessful.

- duplicates: WT-2670 Inefficient I/O when read full DB (poor readahead) (Closed)