Type: Improvement

Resolution: Fixed

Priority: Major - P3

Affects Version/s: 3.6.5

Component/s: Sharding

Fully Compatible

v4.0, v3.6

Sharding 2018-07-16, Sharding 2018-12-17, Sharding 2018-12-31, Sharding 2019-01-14, Sharding 2019-01-28, Sharding 2019-02-11, Sharding 2019-02-25, Sharding 2019-03-11, Sharding 2019-03-25

Original Summary

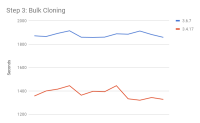

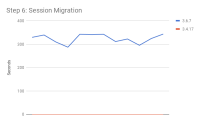

MongoDB 3.6 Balancer much slower than 3.4

Original Description

I ran a balancer comparison test between 3.6 and 3.4 and found that balancing on 3.6 is much slower than on 3.4.

I inserted 100 million documents into both the 3.6 and the 3.4 cluster; each document is 2 KB.

The collection was initialized as follows:

```javascript
db.runCommand({shardCollection: "ycsb.test", key: {_id: "hashed"}, numInitialChunks: 6500})
```
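For scale, the test parameters imply an average per-chunk footprint. The sketch below is a back-of-the-envelope estimate, assuming "2 KB" means 2 KiB and that the hashed `_id` spreads the documents evenly across the 6500 pre-split chunks; it ignores index size and storage-engine overhead. `chunkFootprint` is a hypothetical helper, not part of MongoDB.

```javascript
// Back-of-the-envelope chunk footprint for ycsb.test.
// Assumptions: 2 KB = 2048 bytes, documents spread evenly over the chunks,
// index and storage overhead ignored.
const DOCS = 100e6;           // documents inserted
const DOC_BYTES = 2 * 1024;   // per-document size (assumed 2 KiB)
const INITIAL_CHUNKS = 6500;  // numInitialChunks passed to shardCollection

// Hypothetical helper: average documents and MiB of data per chunk.
function chunkFootprint(docs, docBytes, chunks) {
  const docsPerChunk = docs / chunks;
  const mibPerChunk = (docsPerChunk * docBytes) / (1024 * 1024);
  return { docsPerChunk, mibPerChunk };
}

const { docsPerChunk, mibPerChunk } = chunkFootprint(DOCS, DOC_BYTES, INITIAL_CHUNKS);
console.log(`~${Math.round(docsPerChunk)} docs, ~${mibPerChunk.toFixed(1)} MiB per chunk`);
// prints "~15385 docs, ~30.0 MiB per chunk"
```

Under these assumptions each chunk migration copies roughly 15,000 documents and about 30 MiB of data, about half of the default 64 MB maximum chunk size.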

Test results (wall-clock time for the balancer to finish):

| Version | Storage engine | 1 to 2 shards | 2 to 4 shards |
|---|---|---|---|
| 3.4.15 | MMAPv1 | 41866 s | 30630 s |
| 3.6.5 | MMAPv1 | 90200 s | 44679 s |
| 3.4.15 | wiredTiger | 35635 s | 10740 s |
| 3.6.5 | wiredTiger | 49762 s | 18961 s |

In every run, balancing ended with 3250 chunks on each shard in the 2-shard layout and 1625 chunks on each shard in the 4-shard layout.
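The results can be normalized to make the gap between versions easier to compare. This sketch divides each 3.6.5 time by the matching 3.4.15 time and also divides wall-clock time by the number of chunks that had to move. The moved-chunk counts are inferred from the final distribution (3250 chunks onto the new shard in the 1-to-2 step, 1625 onto each of the two new shards in the 2-to-4 step). Because the balancer can run migrations between different shard pairs at the same time, "seconds per migrated chunk" is an average over the whole run, not the latency of a single migration. `slowdown`, `results`, and `CHUNKS_MOVED` are hypothetical names.

```javascript
// Balancing wall-clock times in seconds, copied from the test results.
const results = [
  { version: "3.4.15", engine: "MMAPv1",     oneToTwo: 41866, twoToFour: 30630 },
  { version: "3.6.5",  engine: "MMAPv1",     oneToTwo: 90200, twoToFour: 44679 },
  { version: "3.4.15", engine: "wiredTiger", oneToTwo: 35635, twoToFour: 10740 },
  { version: "3.6.5",  engine: "wiredTiger", oneToTwo: 49762, twoToFour: 18961 },
];

// Chunks migrated per step, inferred from the final distribution:
// 1 -> 2 shards moves 3250 chunks; 2 -> 4 shards moves 1625 onto each new shard.
const CHUNKS_MOVED = { oneToTwo: 3250, twoToFour: 2 * 1625 };

// How many times longer the newer version took.
function slowdown(newSecs, oldSecs) {
  return newSecs / oldSecs;
}

for (const engine of ["MMAPv1", "wiredTiger"]) {
  const v34 = results.find(r => r.version === "3.4.15" && r.engine === engine);
  const v36 = results.find(r => r.version === "3.6.5" && r.engine === engine);
  for (const step of ["oneToTwo", "twoToFour"]) {
    const ratio = slowdown(v36[step], v34[step]);
    // Average wall-clock seconds per migrated chunk (not per-migration latency).
    const perChunk34 = v34[step] / CHUNKS_MOVED[step];
    const perChunk36 = v36[step] / CHUNKS_MOVED[step];
    console.log(`${engine} ${step}: 3.6 took ${ratio.toFixed(2)}x as long ` +
      `(${perChunk34.toFixed(1)} s vs ${perChunk36.toFixed(1)} s per migrated chunk)`);
  }
}
```

On these numbers 3.6.5 takes between about 1.4 and 2.2 times as long as 3.4.15, with the largest gap for MMAPv1 in the 1-to-2 shard step.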

MongoDB configuration for MMAPv1 engine:

```yaml
security:
  authorization: disabled
sharding:
  clusterRole: shardsvr
replication:
  replSetName: rs1
systemLog:
  logAppend: true
  destination: file
  path: /home/adun/3.4/log/mongod.log
processManagement:
  fork: true
  pidFilePath: /home/adun/3.4/log/mongod.pid
net:
  port: 27017
  bindIp: 127.0.0.1,192.168.10.31
  maxIncomingConnections: 65536
storage:
  dbPath: /home/adun/3.4/data
  directoryPerDB: true
  engine: mmapv1
```

MongoDB configuration for wiredTiger engine:

```yaml
security:
  authorization: disabled
sharding:
  clusterRole: shardsvr
replication:
  replSetName: rs1
systemLog:
  logAppend: true
  destination: file
  path: /home/adun/3.4/log/mongod.log
processManagement:
  fork: true
  pidFilePath: /home/adun/3.4/log/mongod.pid
net:
  port: 27017
  bindIp: 127.0.0.1,192.168.10.31
  maxIncomingConnections: 65536
storage:
  dbPath: /home/adun/3.4/data
  directoryPerDB: true
  engine: wiredTiger
  wiredTiger:
    engineConfig:
      cacheSizeGB: 32
      directoryForIndexes: true
```

Other Questions:

- If we set numInitialChunks to a small value, such as 100, MongoDB creates and splits chunks on its own. But even when we insert the same data (the same number of records), 3.6 creates about 10% more chunks than 3.4, with both MMAPv1 and wiredTiger.
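To compare chunk counts between the two clusters after loading the same data, the chunk documents in the config database can be tallied per shard. This is a sketch, assuming the documents are fetched in the mongo shell with `db.getSiblingDB("config").chunks.find({ ns: "ycsb.test" }).toArray()`; in 3.4 and 3.6 each chunk document records its namespace in `ns` and its owning shard in `shard`. `chunksPerShard` and `relativeChunkIncrease` are hypothetical helpers, not MongoDB APIs.

```javascript
// Tally chunks per shard from config.chunks documents; each document's
// `shard` field names the shard that owns the chunk.
function chunksPerShard(chunkDocs) {
  const counts = {};
  for (const doc of chunkDocs) {
    counts[doc.shard] = (counts[doc.shard] || 0) + 1;
  }
  return counts;
}

// Relative difference between two total chunk counts,
// e.g. (3.6 total - 3.4 total) / 3.4 total.
function relativeChunkIncrease(newTotal, oldTotal) {
  return (newTotal - oldTotal) / oldTotal;
}

// Example with stand-in documents shaped like config.chunks entries.
const sample = [{ shard: "rs1" }, { shard: "rs2" }, { shard: "rs1" }];
console.log(chunksPerShard(sample));
```

A value of about 0.1 from `relativeChunkIncrease(total36, total34)` corresponds to the roughly 10% difference described above.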

- depends on
  - SERVER-38874 Add ability to observe entering critical section in ShardingMigrationCriticalSection (Closed)
- related to
  - SERVER-40187 Remove waitsForNewOplog response field from _getNextSessionMods (Closed)

- links to