Type: Task

Resolution: Done

Priority: Major - P3

Affects Version/s: 4.0.0

Component/s: Performance


For the last 3 months our team has had issues with MongoDB ChangeStream performance.

Target: a stable 15k documents/sec for 3 ChangeStream consumers over independent collections/databases.

Current performance: about 7-8k documents/sec for 1 ChangeStream consumer.

Question: How can we achieve the desired performance numbers on the current cluster setup?

The MongoDB cluster is deployed on AWS and has the following topology:

Shards: 6 shards with PSA architecture. Primary/Secondary: m5.xlarge nodes with EBS disks (2TB of disk space and 6k IOPS for each shard); arbiter: t2.medium node

Mongos: 3 m5.xlarge nodes, with the write load balanced across all of them

Config server: replica set of 3 m5.large nodes

Key points of our work:

- changing the number of shards and sharding the collections properly doesn't boost performance

- increasing the instance type for shards and the disk size/IOPS has a low impact on performance

- cluster monitoring doesn't show any bottlenecks: CPU, memory, disk usage, and network values for shards and mongos are within normal ranges

- increasing document size degrades ChangeStream performance. We pass the _id field only

- increasing the write rate decreases ChangeStream throughput: at 8k+ writes/sec we consume 8k/sec, at 12k writes/sec we consume 5k/sec, at 14k writes/sec we consume 3k/sec

- tuning MongoDB server-side settings doesn't improve performance: decreasing waitForSecondaryBeforeNoopWriteMS, increasing ShardingTaskExecutorPoolMaxConnecting and replWriterThreadCount

- tuning client-side settings doesn't improve performance: changing the batch size, the number of connections, and the filter for inserts and updates

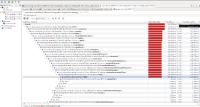

- client-side profiling shows a large wait time when acquiring ChangeStream notifications (detailed info in the attachments)
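
For reference, a consumer of the kind described above (filter on inserts and updates, only the _id passed on, tunable batch size) could look roughly like the sketch below. It assumes the Python driver (pymongo), which the ticket does not specify; the function names `build_pipeline` and `consume`, the default batch size, and the connection parameters are hypothetical and only illustrate the shape of the setup.

```python
from typing import Any, Dict, List


def build_pipeline() -> List[Dict[str, Any]]:
    """Aggregation stages for a change stream limited to inserts and updates."""
    return [
        # Server-side filter: only insert and update events reach the client.
        {"$match": {"operationType": {"$in": ["insert", "update"]}}},
        # Keep only the operation type and document key. The event's own _id
        # (the resume token) is retained because $project does not exclude it.
        {"$project": {"operationType": 1, "documentKey": 1}},
    ]


def consume(uri: str, db_name: str, coll_name: str, batch_size: int = 1000) -> None:
    """Print the _id of every changed document (needs pymongo and a live cluster)."""
    # Imported lazily so build_pipeline() can be used without the driver installed.
    from pymongo import MongoClient

    coll = MongoClient(uri)[db_name][coll_name]
    # batch_size is one of the client-side settings mentioned in the list above.
    with coll.watch(build_pipeline(), batch_size=batch_size) as stream:
        for event in stream:
            print(event["documentKey"]["_id"])
```

Calling `consume("mongodb://<mongos-host>:27017", "<db>", "<collection>")` against a mongos would start printing changed `_id` values; the placeholders must be replaced with real values.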
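
To make the write-rate observation concrete, the short calculation below derives how fast the consumer falls behind at each reported load. It is only arithmetic on the numbers listed above, with "8k+" taken as 8000; the helper name `backlog_growth` is hypothetical.

```python
# (writes/sec, consumed/sec) pairs as reported above; "8k+" is taken as 8000.
observations = [(8_000, 8_000), (12_000, 5_000), (14_000, 3_000)]


def backlog_growth(writes_per_sec: int, consumed_per_sec: int) -> int:
    """Events per second by which the unconsumed backlog grows (never negative)."""
    return max(writes_per_sec - consumed_per_sec, 0)


for writes, consumed in observations:
    growth = backlog_growth(writes, consumed)
    print(f"{writes} writes/sec, {consumed} consumed/sec -> backlog grows by {growth} events/sec")
# 8000 writes/sec, 8000 consumed/sec -> backlog grows by 0 events/sec
# 12000 writes/sec, 5000 consumed/sec -> backlog grows by 7000 events/sec
# 14000 writes/sec, 3000 consumed/sec -> backlog grows by 11000 events/sec
```

So the consumer keeps up only at about 8k writes/sec; beyond that, the lag grows faster than the write rate increases.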