-

Type:

Bug

-

Resolution: Done

-

Priority:

Critical - P2

-

Affects Version/s: 3.0.6

-

Component/s: WiredTiger

-

Environment:Debian Wheezy (7.7)

-

Fully Compatible

-

Linux

-

None

-

None

-

None

-

None

-

None

-

None

-

None

When we try to rebuild a 3.0.6 replicaSet member the process mongod is killed by OOMKiller.

- Reconstruction started at:

2015/08/26-23h44: /etc/init.d/mongodb-rsm-uniq start

- It stoped at 2015/08/27-07h21:

/var/log/syslog:

Aug 27 07:21:39 mongo-uniq1 kernel: mongod invoked oom-killer: gfp_mask=0x201da, order=0, oom_score_adj=0 Aug 27 07:21:39 mongo-uniq1 kernel: mongod cpuset=/ mems_allowed=0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU: 7 PID: 4521 Comm: mongod Not tainted 3.10.23-xxxx-std-ipv6-64 #1 Aug 27 07:21:39 mongo-uniq1 kernel: Hardware name: Supermicro X9SRH-7F/7TF/X9SRH-7F/7TF, BIOS 3.00 07/05/2013 Aug 27 07:21:39 mongo-uniq1 kernel: 0000000000000000 ffff881fc3bb1918 ffffffff81d38724 ffff881fc3bb1998 Aug 27 07:21:39 mongo-uniq1 kernel: ffffffff81d328a8 ffff881fc3bb1998 ffff881fd02936e0 ffff881fd02936f8 Aug 27 07:21:39 mongo-uniq1 kernel: 0000000000000202 ffff881f00000000 0000000000000206 ffff881fc3bb1998 Aug 27 07:21:39 mongo-uniq1 kernel: Call Trace: Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81d38724>] dump_stack+0x19/0x1b Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81d328a8>] dump_header+0x75/0x1b9 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81602326>] ? ___ratelimit+0x96/0x110 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff8112641f>] oom_kill_process+0x1bf/0x310 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff810c7422>] ? has_ns_capability_noaudit+0x12/0x20 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81126b65>] out_of_memory+0x415/0x450 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff8112bad5>] __alloc_pages_nodemask+0x645/0x840 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81159777>] alloc_pages_current+0xb7/0x180 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81122f47>] __page_cache_alloc+0xa7/0xc0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81123b12>] filemap_fault+0x292/0x460 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff8113f90f>] __do_fault+0x6f/0x4c0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff8114243b>] handle_pte_fault+0x8b/0x9f0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81182441>] ? set_fd_set+0x21/0x30 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff811831ed>] ? core_sys_select+0x23d/0x2f0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81143047>] handle_mm_fault+0x147/0x1e0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff8106c391>] __do_page_fault+0x151/0x4b0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff810e4f45>] ? check_preempt_curr+0x75/0xa0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff810f18a7>] ? ktime_get_ts+0x47/0xe0 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff811823cc>] ? poll_select_copy_remaining+0xec/0x140 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff8106c719>] do_page_fault+0x9/0x10 Aug 27 07:21:39 mongo-uniq1 kernel: [<ffffffff81d41172>] page_fault+0x22/0x30 Aug 27 07:21:39 mongo-uniq1 kernel: Mem-Info: Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 DMA per-cpu: Aug 27 07:21:39 mongo-uniq1 kernel: CPU 0: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 1: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 2: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 3: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 4: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 5: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 6: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 7: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 8: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 9: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 10: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 11: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 12: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 13: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 14: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 15: hi: 0, btch: 1 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 DMA32 per-cpu: Aug 27 07:21:39 mongo-uniq1 kernel: CPU 0: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 1: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 2: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 3: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 4: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 5: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 6: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 7: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 8: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 9: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 10: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 11: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 12: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 13: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 14: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 15: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 Normal per-cpu: Aug 27 07:21:39 mongo-uniq1 kernel: CPU 0: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 1: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 2: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 3: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 4: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 5: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 6: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 7: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 8: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 9: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 10: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 11: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 12: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 13: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 14: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: CPU 15: hi: 186, btch: 31 usd: 0 Aug 27 07:21:39 mongo-uniq1 kernel: active_anon:31550706 inactive_anon:1013370 isolated_anon:128 Aug 27 07:21:39 mongo-uniq1 kernel: active_file:134 inactive_file:0 isolated_file:0 Aug 27 07:21:39 mongo-uniq1 kernel: unevictable:0 dirty:0 writeback:7 unstable:0 Aug 27 07:21:39 mongo-uniq1 kernel: free:142436 slab_reclaimable:5981 slab_unreclaimable:15205 Aug 27 07:21:39 mongo-uniq1 kernel: mapped:254 shmem:0 pagetables:69074 bounce:0 Aug 27 07:21:39 mongo-uniq1 kernel: free_cma:0 Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 DMA free:15892kB min:4kB low:4kB high:4kB active_anon:0kB inactive_anon:0kB active_file:0kB inactive_file:0kB unevictable:0k B isolated(anon):0kB isolated(file):0kB present:15976kB managed:15892kB mlocked:0kB dirty:0kB writeback:0kB mapped:0kB shmem:0kB slab_reclaimable:0kB slab_unreclaimabl e:0kB kernel_stack:0kB pagetables:0kB unstable:0kB bounce:0kB free_cma:0kB writeback_tmp:0kB pages_scanned:0 all_unreclaimable? yes Aug 27 07:21:39 mongo-uniq1 kernel: lowmem_reserve[]: 0 1978 128952 128952 Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 DMA32 free:508600kB min:704kB low:880kB high:1056kB active_anon:1133036kB inactive_anon:377844kB active_file:0kB inactive_fi le:0kB unevictable:0kB isolated(anon):0kB isolated(file):0kB present:2046684kB managed:2026228kB mlocked:0kB dirty:0kB writeback:0kB mapped:0kB shmem:0kB slab_reclaima ble:1076kB slab_unreclaimable:3512kB kernel_stack:16kB pagetables:2944kB unstable:0kB bounce:0kB free_cma:0kB writeback_tmp:0kB pages_scanned:0 all_unreclaimable? yes Aug 27 07:21:39 mongo-uniq1 kernel: lowmem_reserve[]: 0 0 126974 126974 Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 Normal free:45252kB min:45252kB low:56564kB high:67876kB active_anon:125069788kB inactive_anon:3675636kB active_file:540kB i nactive_file:0kB unevictable:0kB isolated(anon):512kB isolated(file):0kB present:132120576kB managed:130021460kB mlocked:0kB dirty:0kB writeback:28kB mapped:1020kB shm em:0kB slab_reclaimable:22848kB slab_unreclaimable:57308kB kernel_stack:2888kB pagetables:273352kB unstable:0kB bounce:0kB free_cma:0kB writeback_tmp:0kB pages_scanned :1881 all_unreclaimable? yes Aug 27 07:21:39 mongo-uniq1 kernel: lowmem_reserve[]: 0 0 0 0 Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 DMA: 1*4kB (U) 0*8kB 1*16kB (U) 0*32kB 2*64kB (U) 1*128kB (U) 1*256kB (U) 0*512kB 1*1024kB (U) 1*2048kB (U) 3*4096kB (MR) = 15892kB Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 DMA32: 101*4kB (UEM) 42*8kB (UEM) 49*16kB (UE) 167*32kB (UE) 110*64kB (UEM) 69*128kB (UEM) 46*256kB (UE) 36*512kB (UE) 31*10 24kB (UE) 23*2048kB (UE) 92*4096kB (UEMR) = 508628kB Aug 27 07:21:39 mongo-uniq1 kernel: Node 0 Normal: 9505*4kB (UEM) 81*8kB (EM) 19*16kB (M) 25*32kB (MR) 9*64kB (MR) 8*128kB (R) 2*256kB (R) 1*512kB (R) 0*1024kB 0*2048k B 1*4096kB (R) = 46492kB Aug 27 07:21:39 mongo-uniq1 kernel: 10662 total pagecache pages Aug 27 07:21:39 mongo-uniq1 kernel: 10178 pages in swap cache Aug 27 07:21:39 mongo-uniq1 kernel: Swap cache stats: add 21060325, delete 21050147, find 47193946/47244483 Aug 27 07:21:39 mongo-uniq1 kernel: Free swap = 0kB Aug 27 07:21:39 mongo-uniq1 kernel: Total swap = 9789436kB Aug 27 07:21:39 mongo-uniq1 kernel: 33554431 pages RAM Aug 27 07:21:39 mongo-uniq1 kernel: 538296 pages reserved Aug 27 07:21:39 mongo-uniq1 kernel: 4190101 pages shared Aug 27 07:21:39 mongo-uniq1 kernel: 32870580 pages non-shared Aug 27 07:21:39 mongo-uniq1 kernel: [ pid ] uid tgid total_vm rss nr_ptes swapents oom_score_adj name Aug 27 07:21:39 mongo-uniq1 kernel: [ 2700] 0 2700 5360 1 15 165 -1000 udevd Aug 27 07:21:39 mongo-uniq1 kernel: [ 4455] 0 4455 50367 570 36 740 0 rsyslogd Aug 27 07:21:39 mongo-uniq1 kernel: [ 4521] 106 4521 121313 559 90 7851 0 mongod Aug 27 07:21:39 mongo-uniq1 kernel: [ 4598] 0 4598 3248 0 10 36 0 mdadm Aug 27 07:21:39 mongo-uniq1 kernel: [ 4637] 103 4637 9769 29 23 132 0 ntpd Aug 27 07:21:39 mongo-uniq1 kernel: [ 4646] 0 4646 5129 52 14 25 0 irqbalance Aug 27 07:21:39 mongo-uniq1 kernel: [ 4660] 101 4660 78549 481 71 7365 0 named Aug 27 07:21:39 mongo-uniq1 kernel: [ 4664] 0 4664 12484 27 26 124 -1000 sshd Aug 27 07:21:39 mongo-uniq1 kernel: [ 4838] 0 4838 5224 22 14 40 0 cron Aug 27 07:21:39 mongo-uniq1 kernel: [ 4925] 0 4925 1030 0 7 36 0 acpid Aug 27 07:21:39 mongo-uniq1 kernel: [ 4974] 0 4974 37265 452 33 1045 0 fail2ban-server Aug 27 07:21:39 mongo-uniq1 kernel: [ 4994] 0 4994 12283 307 27 1545 0 munin-node Aug 27 07:21:39 mongo-uniq1 kernel: [ 5055] 0 5055 5987 104 16 412 0 gam_server Aug 27 07:21:39 mongo-uniq1 kernel: [ 5308] 0 5308 4186 0 12 39 0 getty Aug 27 07:21:39 mongo-uniq1 kernel: [ 5309] 0 5309 4186 0 13 38 0 getty Aug 27 07:21:39 mongo-uniq1 kernel: [ 5310] 0 5310 4186 0 12 38 0 getty Aug 27 07:21:39 mongo-uniq1 kernel: [ 5311] 0 5311 4186 0 12 38 0 getty Aug 27 07:21:39 mongo-uniq1 kernel: [ 5312] 0 5312 4186 0 12 38 0 getty Aug 27 07:21:39 mongo-uniq1 kernel: [ 5313] 0 5313 4186 0 12 38 0 getty Aug 27 07:21:39 mongo-uniq1 kernel: [ 5315] 0 5315 5359 0 14 165 -1000 udevd Aug 27 07:21:39 mongo-uniq1 kernel: [ 5316] 0 5316 5359 0 14 165 -1000 udevd Aug 27 07:21:39 mongo-uniq1 kernel: [16237] 106 16237 58006 13576 85 10814 0 mongos Aug 27 07:21:39 mongo-uniq1 kernel: [19001] 106 19001 221422 1667 144 6120 0 mongod Aug 27 07:21:39 mongo-uniq1 kernel: [ 2001] 107 2001 6332 8 16 122 0 nrpe Aug 27 07:21:39 mongo-uniq1 kernel: [11628] 0 11628 17816 28 38 179 0 sshd Aug 27 07:21:39 mongo-uniq1 kernel: [11630] 0 11630 5103 1 13 255 0 bash Aug 27 07:21:39 mongo-uniq1 kernel: [ 2912] 0 2912 1530 11 7 16 0 tail Aug 27 07:21:39 mongo-uniq1 kernel: [22462] 106 22462 35077302 32535764 68347 2410259 0 mongod Aug 27 07:21:39 mongo-uniq1 kernel: Out of memory: Kill process 22462 (mongod) score 987 or sacrifice child Aug 27 07:21:39 mongo-uniq1 kernel: Killed process 22462 (mongod) total-vm:140309208kB, anon-rss:130143056kB, file-rss:0kB

/var/log/syslog:

[...]

2015-08-27T07:21:01.010+0200 I - [rsSync] Index Build: 3580500/15695121 22%

2015-08-27T07:21:01.463+0200 I NETWORK [conn2376] end connection 172.16.21.2:55126 (4 connections now open)

2015-08-27T07:21:01.472+0200 I NETWORK [initandlisten] connection accepted from 172.16.21.2:55128 #2378 (5 connections now open)

2015-08-27T07:21:04.007+0200 I - [rsSync] Index Build: 3613100/15695121 23%

2015-08-27T07:21:07.006+0200 I - [rsSync] Index Build: 3647100/15695121 23%

2015-08-27T07:21:10.007+0200 I - [rsSync] Index Build: 3680300/15695121 23%

2015-08-27T07:21:13.015+0200 I - [rsSync] Index Build: 3710300/15695121 23%

2015-08-27T07:21:16.015+0200 I - [rsSync] Index Build: 3736700/15695121 23%

2015-08-27T07:21:19.007+0200 I - [rsSync] Index Build: 3764300/15695121 23%

2015-08-27T07:21:19.214+0200 I NETWORK [initandlisten] connection accepted from 172.16.21.3:53807 #2379 (6 connections now open)

2015-08-27T07:21:19.218+0200 I NETWORK [conn2377] end connection 172.16.21.3:53803 (5 connections now open)

2015-08-27T07:21:22.021+0200 I - [rsSync] Index Build: 3790300/15695121 24%

2015-08-27T07:21:25.035+0200 I - [rsSync] Index Build: 3805100/15695121 24%

2015-08-27T07:21:25.418+0200 I COMMAND [conn2379] command admin.$cmd command: replSetHeartbeat { replSetHeartbeat: "uniq", pv: 1, v: 7, from: "172.16.21.3:27020", fromId: 2, checkEmpty: false } ntoreturn:1 keyUpdates:0 writeConflicts:0 numYields:0 reslen:149 locks:{} 184ms

2015-08-27T07:21:28.012+0200 I - [rsSync] Index Build: 3827600/15695121 24%

2015-08-27T07:21:31.011+0200 I - [rsSync] Index Build: 3850500/15695121 24%

2015-08-27T07:21:31.877+0200 I NETWORK [initandlisten] connection accepted from 172.16.21.2:55130 #2380 (6 connections now open)

2015-08-27T07:21:31.884+0200 I NETWORK [conn2378] end connection 172.16.21.2:55128 (5 connections now open)

2015-08-27T07:21:34.007+0200 I - [rsSync] Index Build: 3873400/15695121 24%

2015-08-27T07:21:37.016+0200 I - [rsSync] Index Build: 3897400/15695121 24%

(END)

/var/log/mongodb/mongodb.log:

mongo-wt.conf we use:

$ cat /etc/mongod-wt.conf

storage:

dbPath: /var/lib/mongodb

engine: wiredTiger

wiredTiger.engineConfig.cacheSizeGB: 1

systemLog:

destination: file

path: /var/log/mongodb/mongodb.log

logAppend: true

net:

bindIp: 172.16.21.1

http:

enabled: false

We use:

- Debian Wheezy 7.7.

- MongoDB 3.0.6

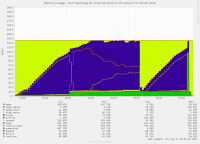

I've attached a RAM graph to this thread.

We can see Mongod using 1GB at first, and then saturate the available memory (128GB).

- is depended on by

-

WT-1973 MongoDB changes for WiredTiger 2.7.0

-

- Closed

-

- is duplicated by

-

SERVER-19818 Cache usage exceeds configured maximum during index builds under WiredTiger

-

- Closed

-

- is related to

-

SERVER-19818 Cache usage exceeds configured maximum during index builds under WiredTiger

-

- Closed

-

- related to

-

SERVER-20204 Segmentation fault during index build on 3.0 secondary

-

- Closed

-

-

SERVER-18829 Cache usage exceeds configured maximum during index builds under WiredTiger

-

- Closed

-